This is Part 5 of 7 from The Capital Efficiency Paradox Series.

Sapien Fusion Deep Dive | February 16, 2026

Joelle Pineau’s Capital-Efficient AI Research Infrastructure

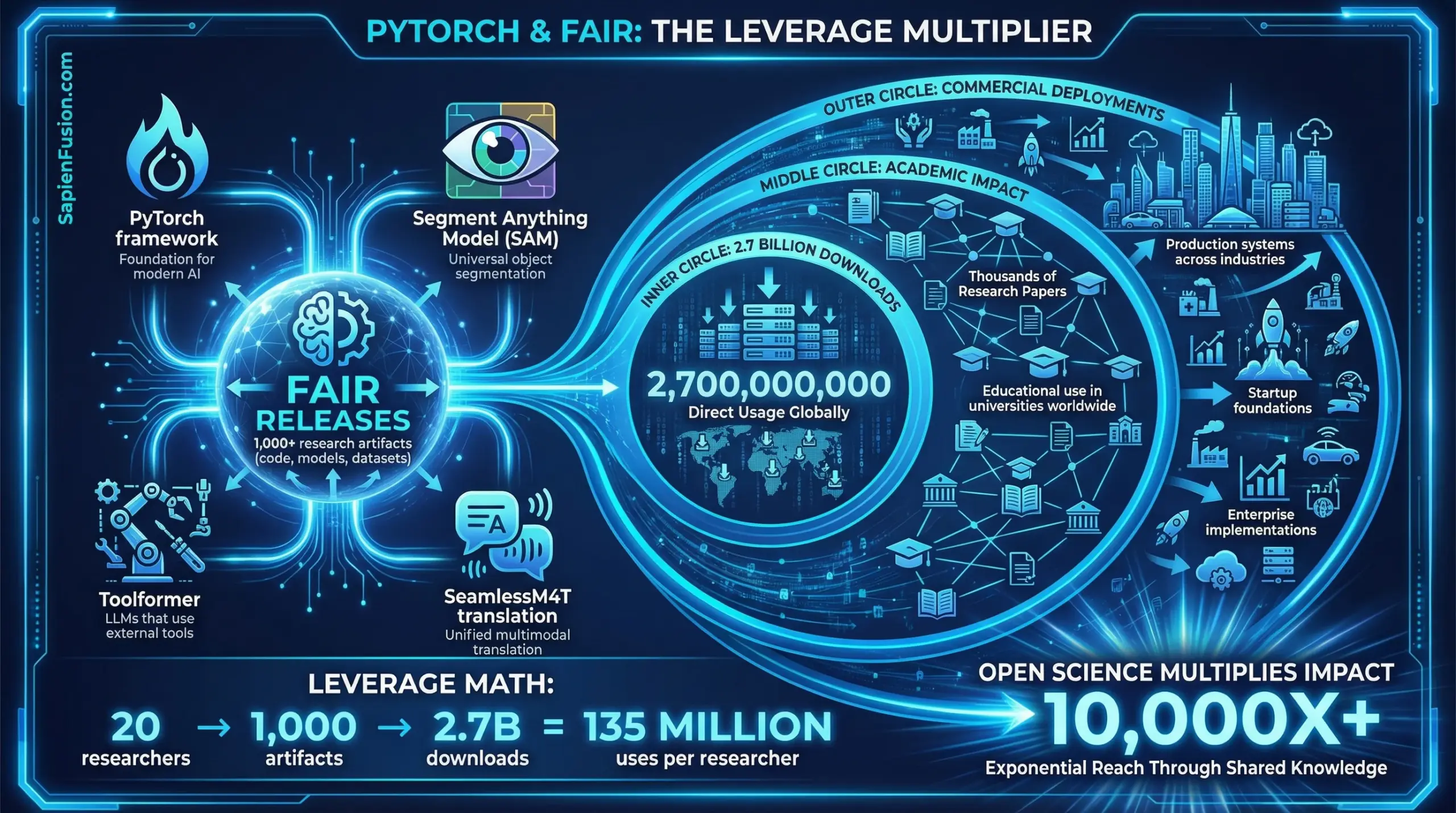

In 2017, Joelle Pineau joined Meta to establish FAIR’s Montreal laboratory with 20-30 researchers. By 2025, when she left to become Chief AI Officer at Cohere, that research organization had released over 1,000 research artifacts—code, models, datasets—downloaded 2.7 billion times globally.

That’s 90 million+ leveraged uses per initial researcher. Not through closed productization. Through strategic open science that multiplied impact without multiplying headcount.
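The per-researcher figure is simple division. As a quick check, here is a minimal sketch that assumes the 2.7 billion downloads are attributed to the initial team of 20 to 30 researchers; the function name is illustrative, not from any source.

```python
TOTAL_DOWNLOADS = 2_700_000_000  # figure quoted in the article


def downloads_per_researcher(total_downloads: int, researchers: int) -> float:
    """Average downloads attributed to each initial researcher."""
    if researchers <= 0:
        raise ValueError("researchers must be positive")
    return total_downloads / researchers


# The article gives a range of 20-30 initial researchers.
for team_size in (20, 30):
    per_head = downloads_per_researcher(TOTAL_DOWNLOADS, team_size)
    print(f"{team_size} researchers: {per_head:,.0f} downloads each")
```

The larger team size gives the 90 million floor cited above; the smaller one gives 135 million.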

Now at Cohere, which raised $500 million at a $6.8 billion valuation in August 2025, Pineau is applying the same capital efficiency principles to enterprise AI: build systems optimized for deployment constraints, not benchmark dominance. Focus on what enterprises actually use, not maximum capability.

This is capital efficiency as strategic clarity about where value actually compounds.

The Bleeding Edge Problem

Most AI labs pursue a singular metric: model capability. Bigger models. Better benchmarks. Maximum performance.

Pineau identified a different pattern during her final 18 months at Meta, working directly with Mark Zuckerberg on company-wide AI strategy: there’s a “capability overhang”—a gap between what current AI systems can accomplish and what’s actually deployed.

The gap exists for three reasons. Efficiency considerations drive enterprises to deploy smaller models to reduce computational costs and latency, even when larger models would perform better. Organizational misalignment means processes and infrastructure don’t match what AI agents can accomplish. Intelligence encoding gaps leave valuable knowledge unencoded in systems AI agents can access.

“While customers may have the capacity to benefit from advanced AI capabilities,” Pineau observed, “they often rationally choose to deploy systems that provide ‘good enough’ performance for specific business problems rather than maximum performance.”

This insight—that the frontier isn’t maximum capability but effective deployment within constraints—defines her approach to capital-efficient AI.

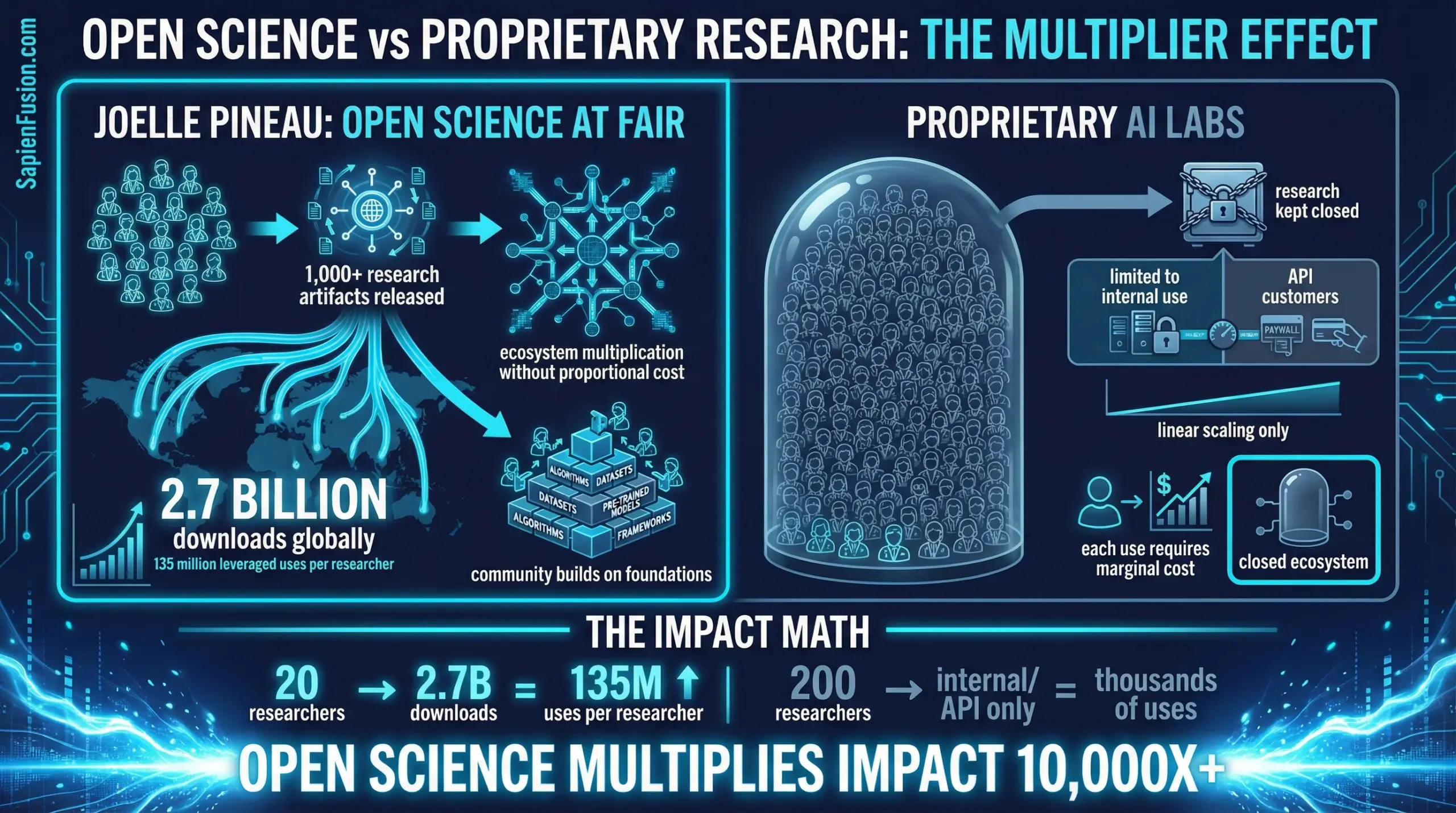

The Open Science Multiplier

FAIR’s capital efficiency under Pineau’s leadership derived from a deliberate strategic choice: release research publicly rather than keeping it proprietary.

The numbers validate the approach. Over 1,000 research artifacts were released including code, models, and datasets, achieving 2.7 billion total downloads globally. Major releases included Segment Anything Model (SAM), SeamlessM4T for multilingual translation, and Toolformer for tool-using LLMs.

Compare this to closed competitors. OpenAI and Anthropic keep most research proprietary. Impact is limited to internal use plus whatever customers pay for API access. FAIR’s approach: invest once, leverage globally, multiply impact without proportional cost increase.

Pineau defended this choice during competitive pressure to close research: “Making code, data, and scientific communications publicly available increases transparency and allows the broader research community to quickly integrate new findings and convert ideas into practice.”

That’s not altruism. It’s capital efficiency through ecosystem leverage.

The Reproducibility Infrastructure Play

While leading FAIR, Pineau pioneered another capital-efficient innovation: the Machine Learning Reproducibility Checklist.

As inaugural Reproducibility Chair for NeurIPS 2019, she introduced three reproducibility measures: a code submission policy under which authors make implementations available for review, a community reproducibility challenge enabling independent verification of published claims, and the ML Reproducibility Checklist providing structured guidance for essential documentation.

The checklist addresses dataset documentation covering statistics, splits, and access procedures. It specifies model documentation including complexity, dependencies, and hyperparameters. Experimental results require documenting the number of runs, measures of central tendency, and variation. Theoretical claims must include complete proofs with all assumptions stated.
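To make the experimental-results item concrete, here is a minimal sketch of the kind of summary it asks for: number of runs, central tendency, and variation. The helper `summarize_runs` and the sample scores are hypothetical illustrations, not part of the checklist itself.

```python
import statistics
from typing import Dict, Sequence


def summarize_runs(scores: Sequence[float]) -> Dict[str, float]:
    """Report run count, central tendency, and variation for repeated runs."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to estimate variation")
    return {
        "n_runs": len(scores),
        "mean": statistics.mean(scores),
        "median": statistics.median(scores),
        "stdev": statistics.stdev(scores),  # sample standard deviation
    }


# Hypothetical accuracy scores from three runs with different random seeds.
report = summarize_runs([0.80, 0.82, 0.84])
print(report)
```

Reporting the spread alongside the mean lets a reader judge whether a claimed improvement exceeds run-to-run noise.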

Impact: Authors using the checklist gave higher scores to their own papers, suggesting structured reflection improved quality. The checklist, now at version 2.0+, remains standard for ML paper submissions globally. A first ML reproducibility conference was held in Princeton in summer 2025.

This is capital efficiency through infrastructure: invest in standards once, reduce wasted effort globally, compound quality improvements across the entire field.

The Enterprise Deployment Thesis at Cohere

Pineau joined Cohere in August 2025 specifically because the company’s positioning aligned with her insights about the capability overhang.

Cohere’s differentiation centers on data privacy, offering AI models deployable on any cloud provider or on-premises, including air-gapped environments isolated from the internet. The platform is cloud-agnostic, supporting Google Cloud, Microsoft Azure, and Oracle Cloud without lock-in. Models are efficiency-optimized, designed for deployment on customer infrastructure while prioritizing cost-effectiveness over maximum capability. The North platform provides security-first agentic capabilities designed for enterprise deployment in private environments.

The strategic logic: enterprises in regulated industries including financial services, healthcare, and government can’t send data to cloud APIs. They need “bring the model to your data” rather than “send data to model.”

Pineau’s role as Chief AI Officer: oversee AI strategy across research, product, and policy teams. Advance systems that work within enterprise constraints rather than demanding enterprises adapt to AI requirements.

Funding: $500M raised at a $6.8B valuation. Investors include Radical Ventures, Inovia Capital, AMD Ventures, NVIDIA, Salesforce Ventures, and Healthcare of Ontario Pension Plan.

That’s capital efficiency as product-market fit: solve the deployment problem enterprises actually have, not the capability ceiling researchers prefer.

The Theoretical Foundation: POMDPs and Sample Efficiency

Pineau’s capital efficiency thinking is grounded in fundamental research on learning under uncertainty.

Her foundational contribution is the Point-Based Value Iteration (PBVI) algorithm, developed during her doctoral research at Carnegie Mellon. The algorithm addresses Partially Observable Markov Decision Processes (POMDPs): situations where agents must make decisions without complete information.

PBVI achieves computational efficiency by operating on selected belief points rather than exhaustively exploring the entire belief space. This reduces the computational resources required for complex planning.
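The idea of backing up values only at selected belief points can be sketched in a few lines. What follows is a simplified illustration, not the published implementation: it uses a fixed grid of belief points (the full algorithm also expands the belief set over time), and the two-state "tiger" problem, its probabilities, rewards, and discount factor are all assumptions chosen for the example.

```python
from typing import List, Tuple

Vector = List[float]
GAMMA = 0.95  # discount factor (assumed)

# Assumed toy problem. States: 0 = tiger left, 1 = tiger right.
# Actions: 0 = listen, 1 = open left door, 2 = open right door.
# Observations: 0 = hear left, 1 = hear right.
T = [  # T[a][s][s2]: transition probabilities
    [[1.0, 0.0], [0.0, 1.0]],  # listening leaves the tiger in place
    [[0.5, 0.5], [0.5, 0.5]],  # opening a door resets the problem
    [[0.5, 0.5], [0.5, 0.5]],
]
Z = [  # Z[a][s2][o]: observation probabilities after arriving in s2
    [[0.85, 0.15], [0.15, 0.85]],
    [[0.5, 0.5], [0.5, 0.5]],
    [[0.5, 0.5], [0.5, 0.5]],
]
R = [  # R[a][s]: immediate reward
    [-1.0, -1.0],
    [-100.0, 10.0],
    [10.0, -100.0],
]


def dot(u: Vector, v: Vector) -> float:
    return sum(x * y for x, y in zip(u, v))


def project(alpha: Vector, a: int, o: int) -> Vector:
    """Discounted value of alpha pushed back through action a and observation o."""
    n = len(alpha)
    return [
        GAMMA * sum(T[a][s][s2] * Z[a][s2][o] * alpha[s2] for s2 in range(n))
        for s in range(n)
    ]


def backup(b: Vector, alphas: List[Vector]) -> Tuple[int, Vector]:
    """Point-based Bellman backup at belief b: best action and its alpha vector."""
    best_action, best_vec, best_val = -1, [], float("-inf")
    for a in range(len(T)):
        g_a = list(R[a])
        for o in range(len(Z[a][0])):
            # Keep only the projected vector that is best at this belief point.
            best_proj = max((project(al, a, o) for al in alphas),
                            key=lambda g: dot(g, b))
            g_a = [x + y for x, y in zip(g_a, best_proj)]
        val = dot(g_a, b)
        if val > best_val:
            best_action, best_vec, best_val = a, g_a, val
    return best_action, best_vec


def pbvi(beliefs: List[Vector], iterations: int) -> List[Tuple[int, Vector]]:
    """Repeated backups over a fixed belief set, starting from a lower bound."""
    worst = min(min(row) for row in R) / (1.0 - GAMMA)
    policy = [(0, [worst] * len(beliefs[0]))]
    for _ in range(iterations):
        alphas = [vec for _action, vec in policy]
        policy = [backup(b, alphas) for b in beliefs]
    return policy


def best_action(b: Vector, policy: List[Tuple[int, Vector]]) -> int:
    """Action attached to the alpha vector with the highest value at b."""
    return max(policy, key=lambda pair: dot(pair[1], b))[0]


beliefs = [[i / 10, 1 - i / 10] for i in range(11)]  # 11 grid points
policy = pbvi(beliefs, iterations=30)
print(best_action([0.5, 0.5], policy))  # 0: listen when the belief is uniform
```

Each iteration costs one backup per belief point, so the work grows with the size of the chosen point set rather than with the full continuous belief space.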

The paper has been cited nearly 1,000 times. That’s capital efficiency at the algorithmic level: reduce sample complexity, improve learning efficiency, enable practical deployment.

Her broader research program emphasizes reinforcement learning algorithms with improved sample efficiency, learning from fewer interactions with the environment. She developed interpretable policies through the HyperCombinator architecture, which expresses policies using controllably small numbers of sub-policies while maintaining explainability. Her work on hierarchical reasoning builds systems capable of planning at multiple temporal scales, from immediate reactions to long-term strategy.

This is capital efficiency through algorithmic innovation: reduce data requirements, computational cost, and deployment complexity simultaneously.

The Leadership Composition: Scale Without Sprawl

Pineau’s leadership style emphasizes leveraging advisory expertise rather than building massive teams.

At McGill, she co-directed the Reasoning and Learning Lab, pursuing application-oriented work including the SmartWheeler intelligent wheelchair, built with laser range finders, gesture recognition, and multi-modal control. The Nursebot initiative developed robotic assistants for elderly and disabled populations. Medical AI applications focused on seizure detection and personalized medicine.

At FAIR Montreal, she started with 20-30 researchers and went on to lead the entire global FAIR organization across North America and Europe. Rather than scaling headcount linearly, she focused on research quality and open release strategy.

At Cohere: Chief AI Officer leading research direction without requiring a 500-person organization. The model: world-class researcher-leaders multiplying impact through open ecosystems rather than proportional team growth.

This is capital efficiency through leverage: research quality × open distribution × ecosystem adoption = impact far exceeding internal headcount.

The Academic-Industry Bridge

Pineau maintains her position as Associate Professor at McGill University’s School of Computer Science while serving as Cohere’s Chief AI Officer. This isn’t time-splitting inefficiency—it’s strategic positioning.

The academic connection provides a talent pipeline from McGill and the Quebec AI ecosystem, research credibility for enterprise customers requiring scientific rigor, publication venues that maintain thought leadership, and long-term research directions beyond quarterly product cycles.

Combined with her roles as CIFAR Chair in AI and Fellow of the Royal Society of Canada, this positions Pineau at the intersection of fundamental research and enterprise deployment, precisely where capital-efficient innovation occurs.

Strategic Implications

Pineau’s trajectory illuminates a capital efficiency playbook applicable beyond AI research.

Open ecosystems multiply impact without proportional cost. FAIR’s 1,000+ releases achieving 2.7 billion downloads demonstrate leverage impossible through closed approaches. Each download represents value created without marginal cost.

Infrastructure investments compound globally. The ML Reproducibility Checklist improves quality across the entire field with a single upfront effort. Standards reduce duplicated work across thousands of researchers.

Deployment constraints matter more than capability ceilings. Enterprises need systems that work within their constraints, not maximum-performance systems requiring infrastructure overhaul. Capital efficiency comes from solving actual deployment problems.

Research leverage through team quality beats linear scaling. Twenty elite researchers releasing open tools generate more impact than 200 producing proprietary systems. Quality × distribution > quantity × control.

Academic-industry positioning captures both fundamental advances and practical deployment knowledge. Pure research misses deployment constraints. Pure industry loses fundamental innovation. The bridge position accesses both.

Pattern Recognition Across Career

Pineau’s career demonstrates consistent strategic choices prioritizing leverage over linear growth.

From 2004 to the present at McGill, she built research programs emphasizing practical deployability rather than pure capability. During 2017-2025 at Meta FAIR, she led the transition from closed to open research, multiplying impact through ecosystem leverage. From 2019 forward as Reproducibility Chair, she established infrastructure improving research quality globally. In 2025 at Cohere, she’s applying deployment-focused thinking to enterprise AI.

Each transition represents choosing systemic leverage over local optimization. From open science to reproducibility standards to enterprise deployment focus, that’s capital efficiency as consistently identifying where effort compounds rather than depletes.

Current Position and Recognition

Pineau currently serves as Chief AI Officer at Cohere, where she oversees AI strategy across research, product, and policy. She maintains her position as Associate Professor at McGill University’s School of Computer Science and holds the CIFAR Chair in AI. She’s a Fellow of the Royal Society of Canada and has received recognition including the NSERC Steacie Fellowship, Governor General’s Innovation Award, and multiple best paper awards at major conferences.

Her work bridges fundamental research in reinforcement learning with practical deployment in enterprise environments, maintaining focus on what makes AI systems usable rather than just capable.

From 20 researchers to 2.7 billion downloads to enterprise deployment architecture: that’s capital efficiency as strategic clarity about where value multiplies versus where it merely accumulates.

Learn More About Joelle Pineau

Professional Profiles:

- LinkedIn – Connect professionally

- McGill Faculty Page – Academic profile and research

- Wikipedia – Biography and career overview

Current Positions:

- Chief AI Officer at Cohere

- Associate Professor at McGill University School of Computer Science

- CIFAR Chair in AI

Recognition:

- NSERC Steacie Fellowship (prestigious Canadian research award)

- Governor General’s Innovation Award

- Fellow of the Royal Society of Canada

- CIFAR Chair in AI

Research Contributions:

- ML Reproducibility Checklist – Standard for ML research documentation

- Point-Based Value Iteration – Foundational POMDP algorithm

- Google Scholar – Publications and citations

Company Resources:

- Cohere – Enterprise AI platform

- Cohere North Platform – Security-first agentic AI

All parts in this series:

- Part 1: Capital Efficiency Through Humanoid Telepresence

- Part 2: Building the First Longitudinal Women’s Health Dataset

- Part 3: $800M to Rebuild Drug Discovery

- Part 4: $230M to $1B in Four Months

- Part 5: From 20 Researchers to 2.7 Billion Downloads

- Part 6: Capital Efficiency Through Government-to-Commercial Path

- Part 7: The Capital Efficiency Playbook

- Research Methodology and Source Verification for the Capital Efficiency Paradox Series