This is Part 4 of 7 from The Capital Efficiency Paradox Series.

[🧠] Sapien Fusion Deep Dive | February 16, 2026

Fei-Fei Li’s Spatial Intelligence Inflection Point

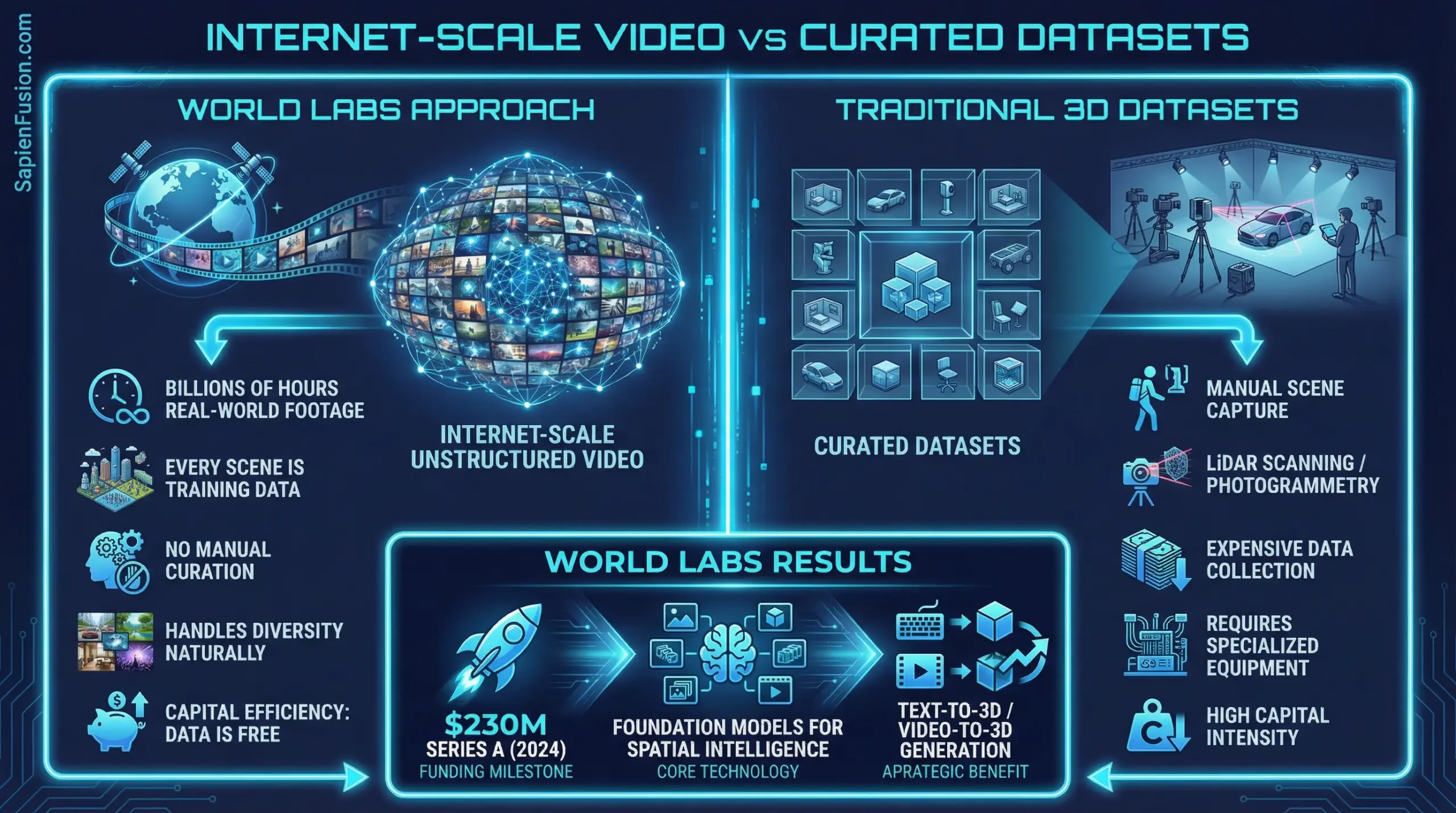

January 2024: Fei-Fei Li and three world-class collaborators founded World Labs to pursue spatial intelligence—AI systems that understand and generate 3D worlds, not just 2D images.

September 2024: Eight months later, the company’s valuation exceeded $1 billion after it raised $230M from Andreessen Horowitz, NEA, and Radical Ventures.

November 2025: Marble launched—the first commercially available large world model generating persistent, navigable 3D environments from text, images, or video.

January 2026: World API announced—programmable spatial intelligence becomes infrastructure.

That’s not iterative improvement. That’s correctly identifying the next frontier before it’s obvious and compressing the typical startup development cycle by 9-12 months through strategic clarity about what matters.

The Bleeding Edge Pattern Recognition

Most AI labs pursued incremental improvements on text and 2D image generation. Fei-Fei Li recognized something different during her 2017-2018 tenure as VP and Chief Scientist of AI/ML at Google Cloud: AI systems operated in a fundamentally two-dimensional paradigm, processing text tokens and image pixels without genuine spatial understanding.

“Human spatial intelligence represents one of the most fundamental and evolutionarily ancient dimensions of human cognition, developed over millions of years of natural selection,” she observed.

The gap wasn’t about model size. It was about dimensionality. LLMs and image generators, no matter how sophisticated, couldn’t reason about or interact with three-dimensional space the way humans do naturally.

While competitors chased larger language models and better image quality, Li identified the capability frontier: spatial intelligence as infrastructure for embodied AI, robotics, autonomous systems, and any application requiring genuine 3D understanding.

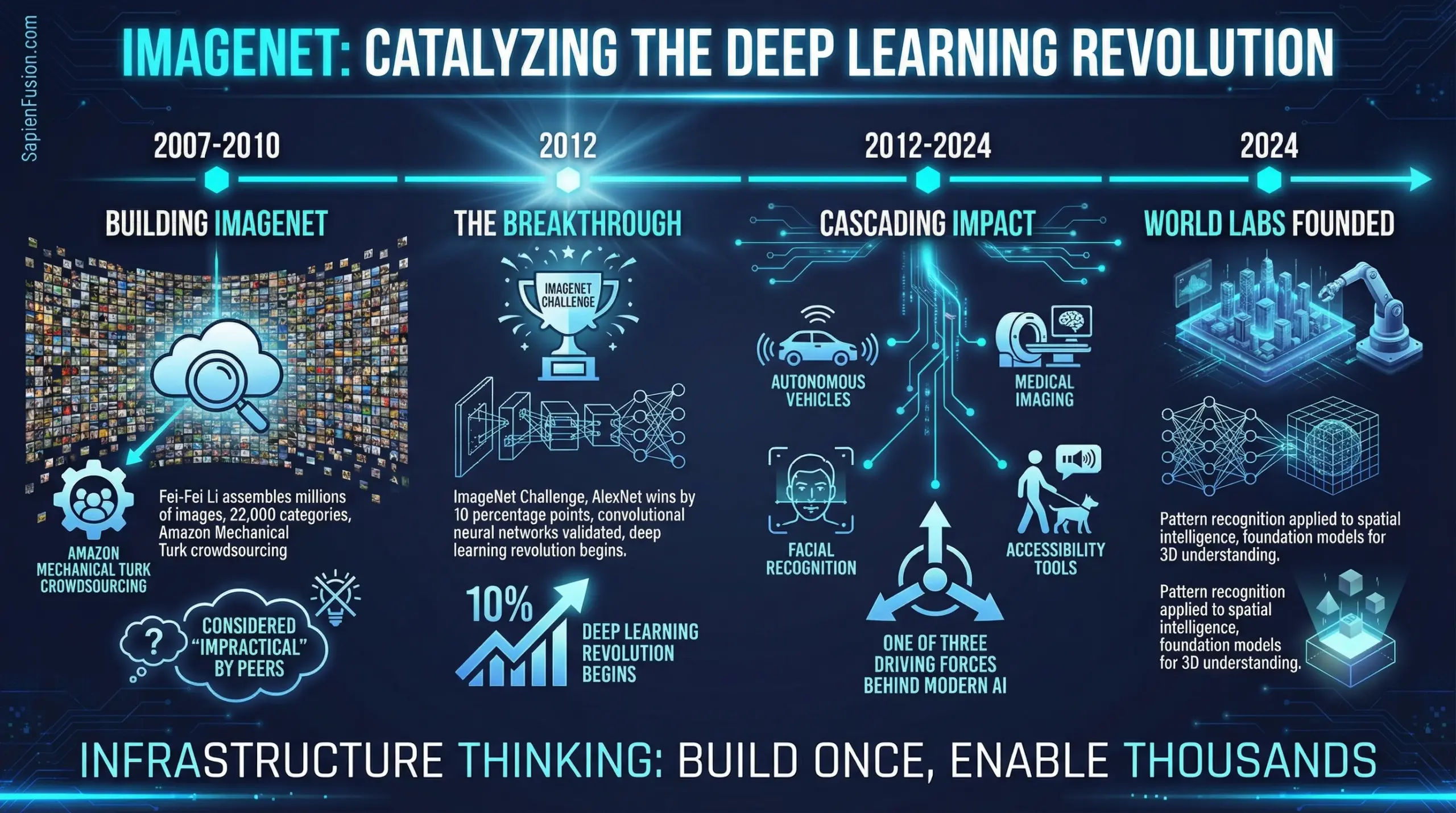

The ImageNet Foundation

Li’s pattern recognition on spatial intelligence wasn’t speculation. It was grounded in successfully identifying a similar inflection point nearly two decades earlier.

Between 2007 and 2010 at Princeton and Stanford, while colleagues pursued incremental algorithm improvements, Li built ImageNet, assembling millions of images across 22,000 categories through Amazon Mechanical Turk crowdsourcing at a time when most considered that scale “impractical.”

The 2012 ImageNet Large Scale Visual Recognition Challenge vindicated her thesis: AlexNet’s convolutional neural network won by approximately 10 percentage points over all competitors, signaling the beginning of the deep learning revolution.

Today, ImageNet is credited as one of three driving forces behind modern AI, alongside architectural advances and powerful computing. The dataset enabled breakthroughs in areas including autonomous vehicles, medical imaging, and facial recognition.

The Founding Team Composition

World Labs’ founding team represents concentrated expertise in precisely the areas necessary for spatial intelligence.

Fei-Fei Li brings her experience as ImageNet creator, Stanford AI Lab director from 2013-2018, Stanford HAI co-director, and former Google Cloud VP and Chief Scientist. Justin Johnson, a computer vision expert who studied under Li, contributed postdoctoral research at Facebook AI Research and influential papers on visual reasoning and neural style transfer. Christoph Lassner, former Meta Reality Labs Research leader, provides expertise in 3D rendering and radiance field reconstruction, having developed the Pulsar renderer. Ben Mildenhall, co-inventor of Neural Radiance Fields or NeRF, brings the foundational technique for 3D scene representation and novel view synthesis.

This isn’t a broad “AI expertise” team. It’s surgical precision: the person who catalyzed the computer vision revolution plus the three people who pioneered the specific technical foundations—NeRF, 3D rendering, visual reasoning—required for spatial intelligence.

Team size as of early 2026: 20-30 world-class researchers and engineers. Not 500. Not 5,000. Lean, focused, expert.

The Compressed Development Timeline

The company was founded in January 2024, raised a $100M seed round by June 2024, then closed a $130M Series A extension in September 2024, bringing total funding to $230M and its valuation past $1B. Marble entered limited beta preview in September 2025, reached general availability with multimodal capabilities in November 2025, and was followed in January 2026 by World API, which established spatial intelligence as programmable infrastructure.
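The timeline arithmetic above can be checked with a few lines of Python. This is only a sketch: the article gives months, not exact dates, so the first day of each month is an assumed placeholder, and the names `MILESTONES` and `months_between` are illustrative.

```python
from datetime import date

# Milestones as stated in the article. Day-of-month is unknown,
# so the 1st of each month is an assumed placeholder.
MILESTONES = [
    ("Founded", date(2024, 1, 1)),
    ("Seed round ($100M)", date(2024, 6, 1)),
    ("Series A extension ($130M)", date(2024, 9, 1)),
    ("Marble limited beta", date(2025, 9, 1)),
    ("Marble general availability", date(2025, 11, 1)),
    ("World API announced", date(2026, 1, 1)),
]


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end (month granularity only)."""
    return (end.year - start.year) * 12 + (end.month - start.month)


founded = MILESTONES[0][1]
months_since_founding = {
    name: months_between(founded, when) for name, when in MILESTONES
}
total_raised_musd = 100 + 130  # seed plus Series A extension, in $M

for name, months in months_since_founding.items():
    print(f"{name}: month {months}")
print(f"Total raised: ${total_raised_musd}M")
```

At month granularity, the Series A extension lands 8 months after founding and general availability lands 22 months after founding, consistent with the "eight months" and "less than two years" figures in the text.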

That’s concept to commercially available platform in less than two years. Most AI startups spend 2-3 years in development before launching anything customers can use.

The compression came from four factors. First, the team built on prior technical foundations such as NeRF research, radiance field rendering, and 3D Gaussian splatting rather than developing from scratch. Second, the founding team’s reputation gave the company immediate access to top-tier talent without extended hiring searches. Third, market clarity focused development on specific applications, including creative tools, robotics training, and architectural visualization, rather than exploring numerous use cases. Fourth, accepting technical risk meant launching a beta while continuing to improve it, gathering real-world feedback rather than pursuing laboratory perfection.

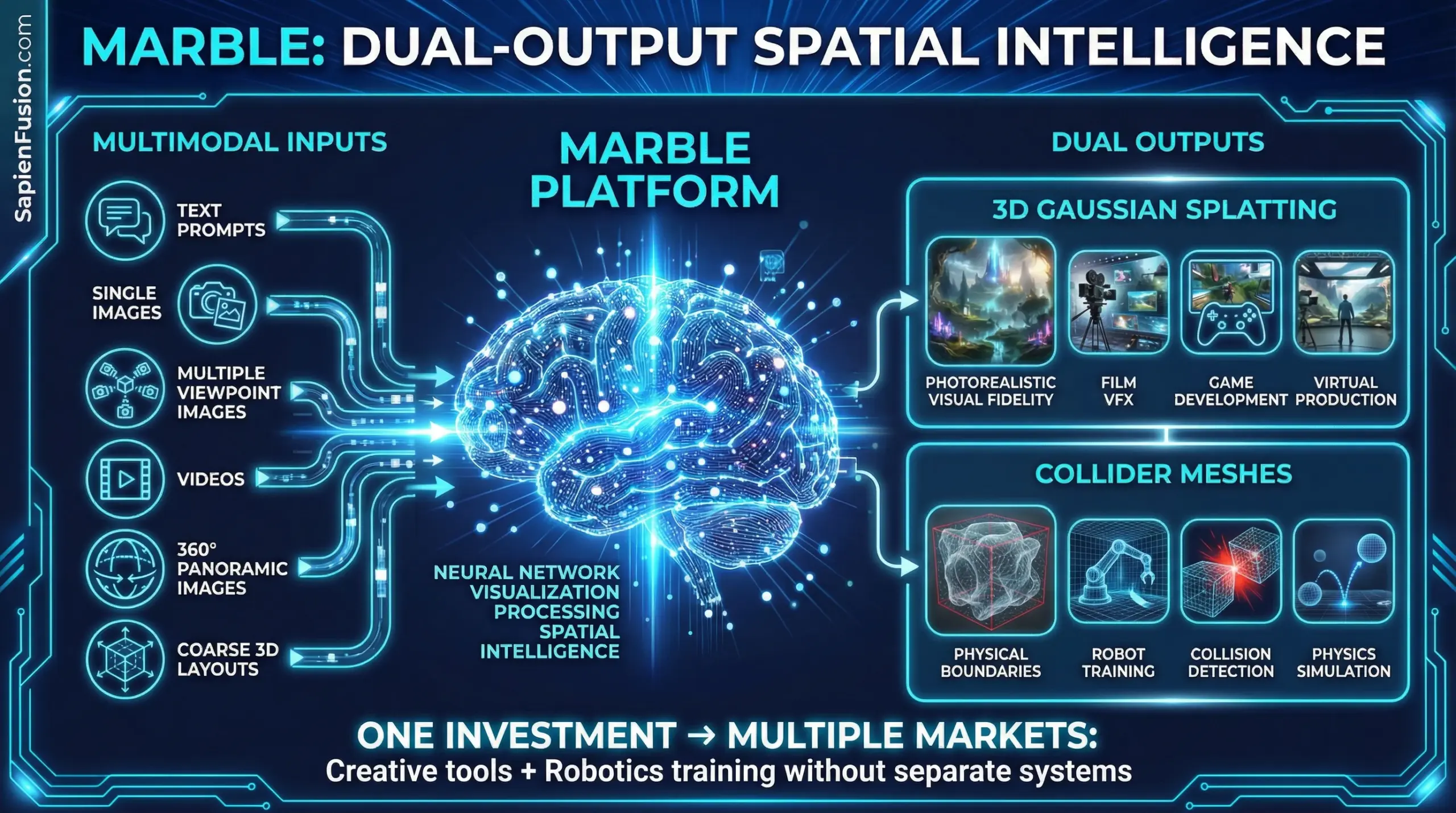

The Technical Architecture: Dual-Output Spatial Intelligence

Marble’s capital efficiency derives partly from its dual-output architecture solving two problems simultaneously.

The platform generates 3D Gaussian splats, which deliver photorealistic visual fidelity through efficient rendering of 3D Gaussian distributions. Simultaneously, it produces collider meshes: low-fidelity polygon meshes that define physical boundaries and interactive properties.

This means Marble generates environments with both visual realism AND physical accuracy, usable for human-facing creative tools like film VFX, game development, and virtual production, plus physics-based robot simulations requiring training environments with correct collision detection.

A single investment in spatial intelligence infrastructure serves multiple markets without building separate systems.
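One way to picture the dual output is as a single result object that carries both representations side by side. The sketch below is a hypothetical data model, not Marble's actual format: the class names `GaussianSplat`, `ColliderMesh`, and `GeneratedWorld` are invented for illustration, and real Gaussian splats carry more parameters (for example orientation and view-dependent color) than this simplified version.

```python
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass
class GaussianSplat:
    """One 3D Gaussian, simplified to a center, per-axis scale, and RGBA color."""
    center: Vec3
    scale: Vec3
    rgba: tuple[float, float, float, float]


@dataclass
class ColliderMesh:
    """Low-fidelity triangle mesh used only for physics and collision."""
    vertices: list[Vec3]
    triangles: list[tuple[int, int, int]]  # indices into `vertices`

    def bounds(self) -> tuple[Vec3, Vec3]:
        """Axis-aligned bounding box as (min corner, max corner)."""
        xs, ys, zs = zip(*self.vertices)
        return (min(xs), min(ys), min(zs)), (max(xs), max(ys), max(zs))


@dataclass
class GeneratedWorld:
    """A single generation result carrying both outputs (hypothetical)."""
    splats: list[GaussianSplat]  # consumed by a renderer
    collider: ColliderMesh       # consumed by a physics engine


# A 4 x 4 floor as the collider, with one splat hovering just above it.
floor = ColliderMesh(
    vertices=[(0.0, 0.0, 0.0), (4.0, 0.0, 0.0), (4.0, 0.0, 4.0), (0.0, 0.0, 4.0)],
    triangles=[(0, 1, 2), (0, 2, 3)],
)
world = GeneratedWorld(
    splats=[GaussianSplat((2.0, 0.1, 2.0), (0.05, 0.05, 0.05), (0.8, 0.8, 0.8, 1.0))],
    collider=floor,
)
print(world.collider.bounds())
```

A creative tool would read only `world.splats`, while a robot simulator would read only `world.collider`, which is the sense in which one generation pass can serve both markets.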

The Multimodal Input Strategy

Marble accepts diverse inputs including text prompts, single images, multiple images from different viewpoints, videos, 360-degree panoramic images, and coarse 3D layouts.

This multimodal approach recognizes that different creative and technical contexts require different forms of input. An artist might begin with text, a filmmaker might provide reference footage, and an architect might sketch rough geometric layouts.

The capital efficiency: one platform handles all input modalities rather than building separate tools for each workflow. Development investment compounds across use cases.
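The "one platform, many inputs" idea can be sketched as a single entry point that accepts any mix of the six input types listed above. Everything here is an assumption made for illustration: `InputKind`, `WorldInput`, and `describe_job` are hypothetical names, not part of Marble.

```python
from dataclasses import dataclass
from enum import Enum


class InputKind(Enum):
    """The six input modalities named in the article."""
    TEXT = "text"
    SINGLE_IMAGE = "single_image"
    MULTI_VIEW_IMAGES = "multi_view_images"
    VIDEO = "video"
    PANORAMA_360 = "panorama_360"
    COARSE_3D_LAYOUT = "coarse_3d_layout"


@dataclass
class WorldInput:
    kind: InputKind
    payload: str  # a prompt string or a file path (hypothetical convention)


def describe_job(inputs: list[WorldInput]) -> str:
    """Summarize one generation job, whatever mix of modalities it contains."""
    kinds = sorted({item.kind.value for item in inputs})
    return "generate world from: " + ", ".join(kinds)


job = [
    WorldInput(InputKind.TEXT, "a mountain cabin at dusk"),
    WorldInput(InputKind.VIDEO, "reference_footage.mp4"),
]
print(describe_job(job))
```

The design point is that every workflow funnels into the same job description, so adding a modality means adding an enum member rather than a separate tool.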

The World API: Spatial Intelligence as Infrastructure

January 2026’s World API announcement represents the strategic endgame: spatial intelligence transitioning from product to infrastructure layer.

The API provides programmable access to Marble’s capabilities, enabling developers to generate, manipulate, and query 3D environments from their own code. This positions World Labs as an infrastructure provider, similar to how cloud providers offer computing resources, storage, and specialized services.

The implications extend beyond creative applications. Robotics companies can generate unlimited synthetic training environments. Autonomous vehicle developers can create edge-case scenarios for testing. Architectural firms can rapidly prototype spatial designs. Game developers can procedurally generate content.
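To make "unlimited synthetic training environments" concrete, here is a minimal sketch of how a robotics team might enumerate varied scene descriptions before handing them to a generation service. It does not call or model World API, whose request format is not described in this article. The variable names and scene vocabulary are hypothetical.

```python
from itertools import product

# Hypothetical scene vocabulary; a real project would choose its own axes.
ROOMS = ["warehouse aisle", "home kitchen"]
LIGHTING = ["bright daylight", "dim evening light"]
CLUTTER = ["sparse", "cluttered"]


def scene_prompts() -> list[str]:
    """Every combination of room, lighting, and clutter as a text prompt."""
    return [
        f"{room}, {light}, {clutter} floor"
        for room, light, clutter in product(ROOMS, LIGHTING, CLUTTER)
    ]


prompts = scene_prompts()
print(len(prompts), "scene variants")
print(prompts[0])
```

Three short lists already yield eight distinct environments, and each added axis multiplies the count, which is why programmatic generation scales where manual scene building does not.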

World Labs captures value not just from direct Marble users but from every downstream application built on World API. That’s platform economics: enable others to build value on top of your infrastructure and capture a percentage of the expanded market.

Strategic Positioning Across Three Horizons

World Labs operates simultaneously across three strategic horizons, each with different business models and timelines.

The immediate horizon focuses on creative professionals through Marble subscriptions. Tiered pricing starts with basic access at $15 monthly or $120 annually for enthusiasts and students, followed by a professional tier at $120 monthly or $960 annually with expanded capabilities, and an enterprise tier with custom pricing and dedicated support. This generates immediate revenue while validating product-market fit.
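The prices quoted above imply a specific annual discount, which a short calculation makes explicit. The figures come from the article; the names `TIERS` and `annual_savings` are illustrative.

```python
# Prices in USD as quoted in the article.
TIERS = {
    "basic": {"monthly": 15, "annual": 120},
    "professional": {"monthly": 120, "annual": 960},
}


def annual_savings(monthly: int, annual: int) -> tuple[int, float]:
    """Return (dollars saved, fraction saved) versus paying monthly for a year."""
    twelve_months = monthly * 12
    saved = twelve_months - annual
    return saved, saved / twelve_months


savings = {name: annual_savings(**prices) for name, prices in TIERS.items()}

for name, (dollars, fraction) in savings.items():
    print(f"{name}: save ${dollars} per year ({fraction:.0%})")
```

Both tiers work out to the same discount: paying annually costs two thirds of twelve monthly payments, a saving of $60 on the basic tier and $480 on the professional tier.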

The medium-term horizon targets robotics and embodied AI through World API. Companies training robotic systems require vast quantities of diverse, physically accurate 3D environments, and generating these environments manually remains prohibitively expensive. World Labs provides programmatic generation at scale, positioning itself as essential infrastructure for the robotics industry.

The long-term horizon positions spatial intelligence as a foundational AI capability. Just as LLMs became infrastructure for language processing and diffusion models for image generation, World Labs aims to establish spatial intelligence as the standard for 3D understanding and generation.

This three-horizon strategy means the company generates revenue today through creative tools, builds moats through API adoption for robotics, and positions for foundational infrastructure status as spatial intelligence becomes ubiquitous.

The Academic-Industry Bridge

Li maintains her Stanford position as Sequoia Professor in Computer Science and co-director of Stanford’s Human-Centered AI Institute while founding World Labs. This isn’t a distraction—it’s strategic positioning.

The academic connection provides a talent pipeline from Stanford’s AI program, a credibility signal for recruiting world-class researchers, research collaboration that enables faster iteration on core algorithms, and a long-term outlook beyond quarterly metrics that preserves focus on fundamental breakthroughs.

World Labs demonstrates that academic expertise and commercial execution aren’t opposing forces. They’re complementary capabilities when properly integrated.

Pattern Recognition Across Career

Li’s career shows consistent pattern recognition identifying infrastructure-level opportunities before consensus forms.

Between 2007 and 2010, she built ImageNet when others pursued algorithmic tweaks, creating the dataset that enabled the deep learning revolution. From 2013 to 2018, as Stanford AI Lab director, she established a research agenda around human-centered AI when most focused purely on capabilities. During 2017-2018 at Google Cloud, she recognized enterprise AI adoption patterns while positioned inside one of tech’s largest companies. In 2024, she founded World Labs, targeting spatial intelligence as the next AI frontier while others focused on LLM scale.

Each transition represents identifying where infrastructure investment compounds over time rather than chasing immediate returns. From ImageNet to spatial intelligence, that’s capital efficiency as strategic patience about what matters long-term.

Current Position and Recognition

Li currently serves as Sequoia Professor of Computer Science at Stanford University, Co-Director of Stanford’s Human-Centered AI Institute, and Founder and CEO of World Labs. Her recognition includes election to the National Academy of Engineering in 2017, National Academy of Medicine in 2020, and American Academy of Arts and Sciences in 2020. She received the inaugural AAAI Squirrel AI Award for AI for the Benefit of Humanity in 2019 and joined the UN Secretary-General’s Scientific Advisory Board in 2024.

TIME Magazine named her one of the “100 Most Influential People in AI” in both 2023 and 2024, recognizing sustained impact across computer vision, AI ethics, and now spatial intelligence.

The convergence: decades of computer vision research, proven track record catalyzing industry transformations, academic credibility, and operational expertise executing fast startup timelines. World Labs isn’t just another AI company—it’s the synthesis of Li’s infrastructure-level pattern recognition applied to what she identified as AI’s next dimensional frontier.

Learn More About Fei-Fei Li

Professional Profiles:

- LinkedIn – Connect professionally

- Stanford Faculty Page – Academic profile and research

- Wikipedia – Biography and career overview

Current Positions:

- Founder and CEO at World Labs

- Sequoia Professor of Computer Science at Stanford University

- Co-Director of Stanford HAI (Human-Centered AI Institute)

Recognition:

- National Academy of Engineering (2017)

- American Academy of Arts and Sciences (2020)

- UN Secretary-General’s Scientific Advisory Board (2024)

- AAAI Squirrel AI Award for AI for the Benefit of Humanity (2019)

- TIME “100 Most Influential People in AI” (2023, 2024)

Company Resources:

- World Labs – Spatial intelligence platform

- Marble World Model – Large world model announcement

- World API – Programmable spatial intelligence infrastructure

Research Contributions:

- ImageNet – Visual database that catalyzed deep learning revolution

- Stanford AI Lab – Former director (2013-2018)

- Google Scholar – Publications and citations

All parts in this series:

- Part 1: Capital Efficiency Through Humanoid Telepresence

- Part 2: Building the First Longitudinal Women’s Health Dataset

- Part 3: $800M to Rebuild Drug Discovery

- Part 4: $230M to $1B in Four Months

- Part 5: From 20 Researchers to 2.7 Billion Downloads

- Part 6: Capital Efficiency Through Government-to-Commercial Path

- Part 7: The Capital Efficiency Playbook

- Research Methodology and Source Verification for the Capital Efficiency Paradox Series